In our household, a favorite game is to throw different grocery names at the Alexa shopping list and see what the NLQ (natural language query) interpreter comes up with. For many items, like “milk” or “sourdough bread”, Alexa does pretty well no matter who in the household is speaking. For others, not so much.

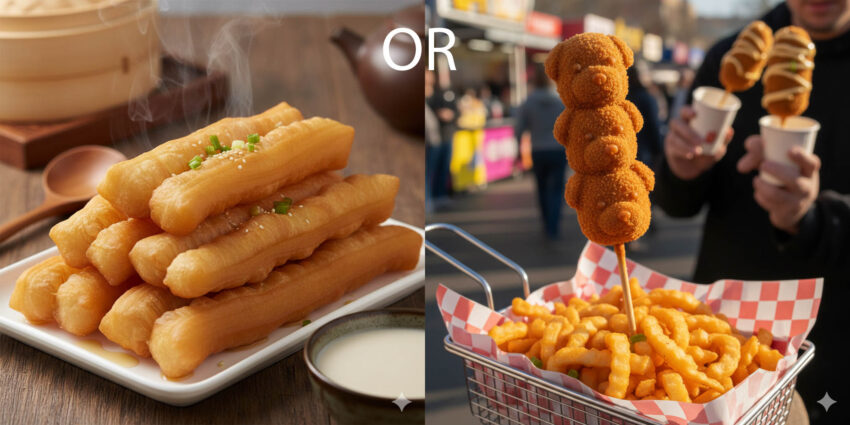

For example, “fried dough sticks”, an English term for 油条 (phonetically, “you tiao”), often ends up as “fried dog sticks”. “You tiao” invariably ends up as “Youtube”, and I have no idea why I’d buy a “Youtube” at the local grocery. “Pea tips” ends up as “P. Tips”, though Alexa does get the alternate “pea sprouts” right. The Chinese name “dau miu” ends up as “double mute”.

This is not a slam on Alexa. The NLQ behind Alexa was presumably trained on a finite set of North American English food terms. Within that set, it’s amazing how well it works across such a wide variety of voices. Outside that set, well, the results speak for themselves.

This kind of misidentification isn’t limited to speech. There are numerous examples of facial recognition technology (FRT) misidentifying people with darker skin tones, because those systems were trained on data sets made up predominantly of lighter-skinned faces.

The point is that if you want your information system to give you meaningful results, you need to train it on, and feed it, good information. This isn’t just a problem for training LLMs; it applies equally to product management and to corporations in general.

At a previous company, upper management refused to send PMs to trade shows (other than to work the sales booth) and refused to pay for market research. PMs were expected to gather all their market intelligence from the Internet and from one-on-one cold or warm calls with customers. That’s not to say PMs shouldn’t do those things (they absolutely should), but there are far more cost-efficient ways to use PM time.

If you’re penny-pinching in the wrong places, don’t be surprised if your product launches as fried dog sticks.